How I Approach Analytic Thinking

The work here starts from a simple observation: most analytic problems aren’t caused by bad data — they’re caused by framing the problem incorrectly. Teams struggle when the wrong construct is measured, when different types of error get blended together, or when methods are applied outside the purpose they were designed for.

These essays explore those upstream issues. They clarify constructs, correct category errors, and examine the systems behind demand, forecasting, and decision‑making. The goal is not more sophistication, but better thinking — the kind that leads to cleaner analytics and stronger decisions.

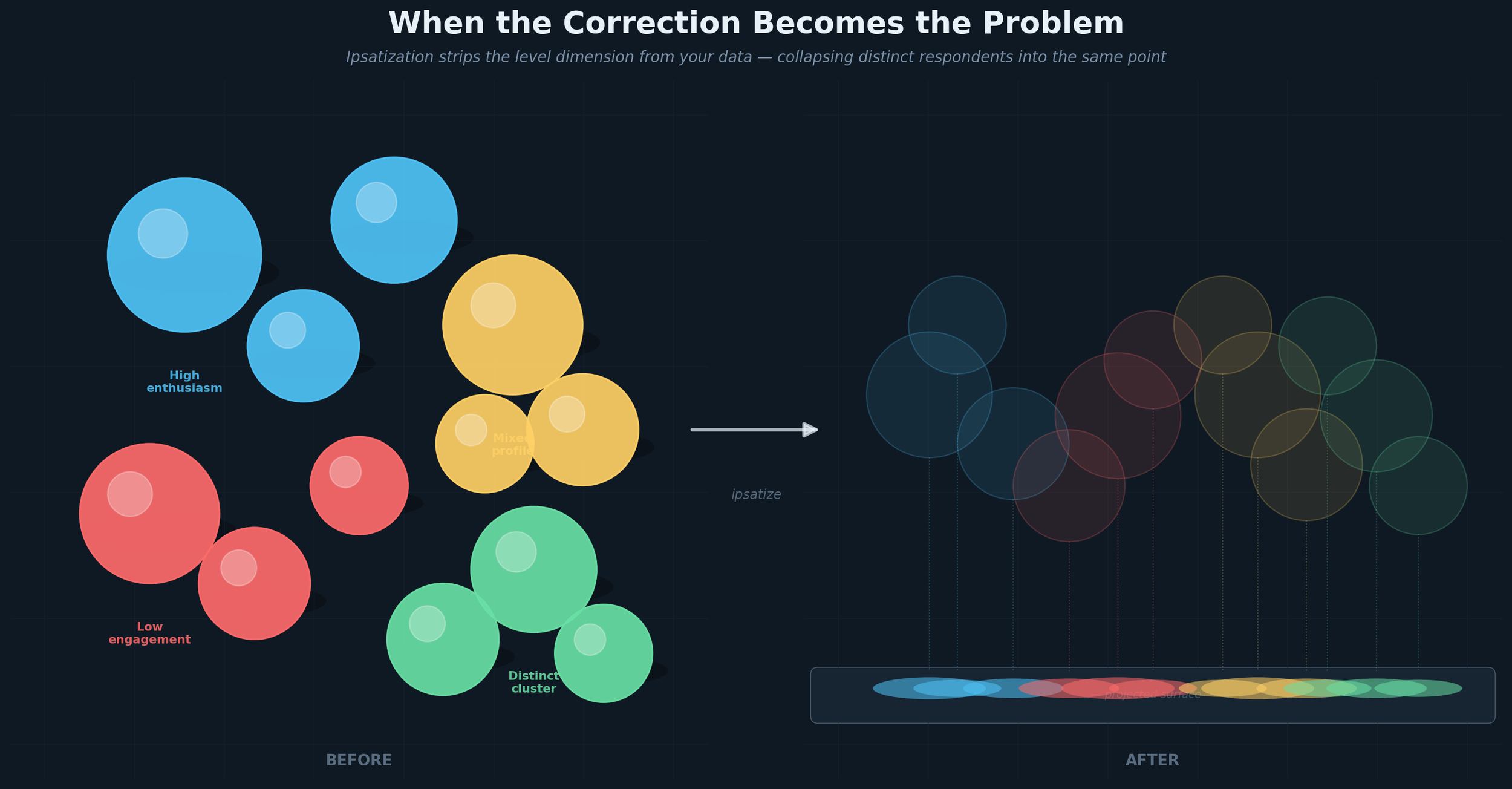

When the Correction Becomes the Problem

Mean-centering survey data across markets sounds like the right correction for cross-national scale bias. For segmentation, it is categorically the wrong one — it collapses genuinely different respondents into the same point in space and destroys the signal your clustering algorithm needs to find.

This article addresses the geometry of why this happens, what to do instead, and why latent methods handle the problem more gracefully than distance-based approaches.
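The collapse is easy to see in a toy case. The sketch below is only an illustration under assumptions of my own choosing: the ratings are invented, "mean-centering" is taken to mean subtracting each item's within-market mean, and the gap between the two markets is assumed to be genuine rather than scale bias. The helper name `center_within_market` is hypothetical.

```python
from math import dist
from statistics import mean


def center_within_market(rows):
    """Subtract each item's market mean from every respondent's rating."""
    item_means = [mean(col) for col in zip(*rows)]
    return [[x - m for x, m in zip(row, item_means)] for row in rows]


# Invented 1-7 ratings on two items (illustrative only, not real survey data).
# Assumption: market A respondents genuinely rate higher than market B respondents.
ratings = {
    "market_a": [[7, 6], [5, 4]],
    "market_b": [[4, 3], [2, 1]],
}

centered = {m: center_within_market(rows) for m, rows in ratings.items()}

# Compare the first respondent in each market before and after centering.
a_raw, b_raw = ratings["market_a"][0], ratings["market_b"][0]
a_ctr, b_ctr = centered["market_a"][0], centered["market_b"][0]

raw_distance = dist(a_raw, b_raw)
centered_distance = dist(a_ctr, b_ctr)

print("distance before centering:", raw_distance)
print("distance after centering: ", centered_distance)
```

Before centering, the two respondents are clearly separated. After centering, they occupy the same point, so any distance-based clustering step would treat them as identical.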

Choice Modeling in Pharmaceutical Marketing: A Precise Tool, Imprecisely Applied

Choice modeling has been the de facto tool for demand estimation in pharma for decades. Most brand teams have used it. Most research agencies have run it.

And yet it is applied on autopilot more often than it should be — commissioned because it is the expected next step rather than because someone has thought carefully about whether the design is right, whether the scenarios are realistic, and whether it is even the right tool for the question being asked.

I first wrote on this topic in 2008. My views haven't changed much — but I think they are more precisely stated now than they were. The one area where my thinking has genuinely evolved is the multi-stakeholder architecture, where I suspect the field has sometimes added complexity without a proportionate gain in usefulness.

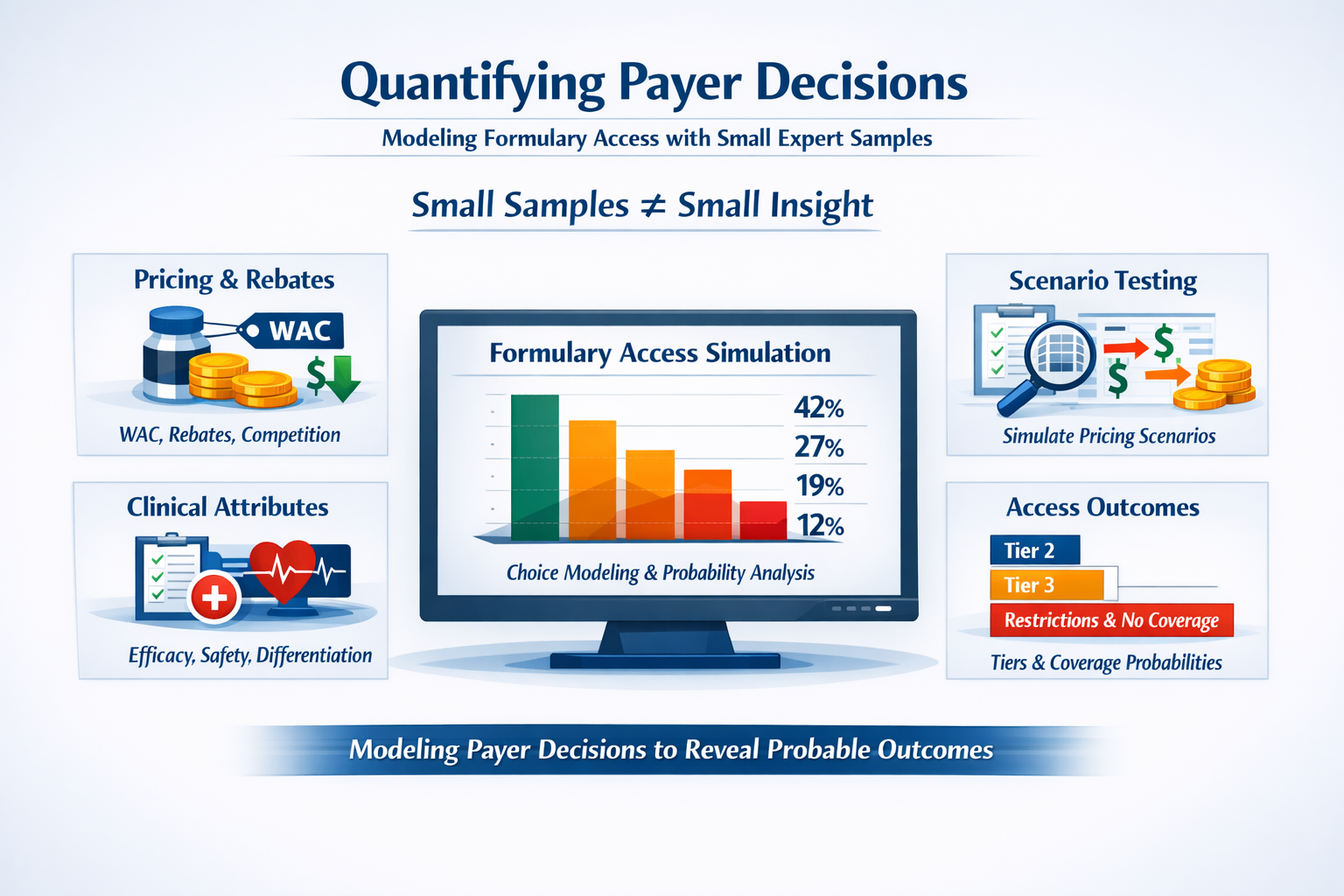

Quantifying Payer Decisions: A Structured Approach to a “Small Sample” World

Small samples don't hinder our ability to quantify payer decisions.

This is because payers aren't making random choices. They operate inside a structured system with clear financial incentives and well-understood levers. When the decision environment is that structured, you don't need hundreds of respondents to detect a signal. You need a disciplined design.

Ordered choice modeling treats formulary outcomes such as tier placement, restrictions, and coverage as what they actually are: a natural hierarchy. The result is a probabilistic model whose estimated coefficients become the engine for "what if" scenario simulators that access teams can use to pressure-test pricing, rebates, and contracting strategy before going to market.
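To make the simulator idea concrete, here is a minimal sketch of how ordered-model coefficients could drive a "what if" comparison. It assumes an ordered logit form, which is one common choice and not necessarily the specification used in the article. The outcome labels, coefficients, cutpoints, and scenario values are all hypothetical placeholders, not estimates from any study.

```python
from math import exp

# Hypothetical ordered outcomes, from least to most favorable.
OUTCOMES = ["not covered", "non-preferred tier", "preferred tier"]

# Hypothetical coefficients and cutpoints (placeholders, not fitted values).
COEFS = {"rebate_pct": 0.06, "price_k": -0.4}
CUTPOINTS = [-1.0, 1.0]  # must be ascending


def logistic(z):
    return 1.0 / (1.0 + exp(-z))


def outcome_probs(scenario):
    """Ordered logit: P(Y <= k) = logistic(cut_k - utility)."""
    utility = sum(COEFS[name] * value for name, value in scenario.items())
    cumulative = [logistic(c - utility) for c in CUTPOINTS] + [1.0]
    probs, previous = [], 0.0
    for c in cumulative:
        probs.append(c - previous)  # difference of adjacent cumulative probs
        previous = c
    return probs


base = outcome_probs({"rebate_pct": 10, "price_k": 4})
higher_rebate = outcome_probs({"rebate_pct": 30, "price_k": 4})

for label, p_base, p_new in zip(OUTCOMES, base, higher_rebate):
    print(f"{label:>20}: base {p_base:.2f} -> higher rebate {p_new:.2f}")
```

Each scenario yields a full probability distribution over the ordered outcomes, so pricing and rebate options can be compared by how much probability they shift toward the favorable end of the hierarchy.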

Two Lenses on the Same Data: What Chart Audits Can — and Can’t — Tell Us

Chart audits feel incredibly concrete because they’re built from real patient charts, and yet we still run into discrepancies with secondary data that are hard to explain to a client. The reason is simple once you see it — the way we collect chart data quietly shapes the story it tells. And the same is true for claims. Neither one is “the truth.” They’re just different windows into a market we can’t observe directly.

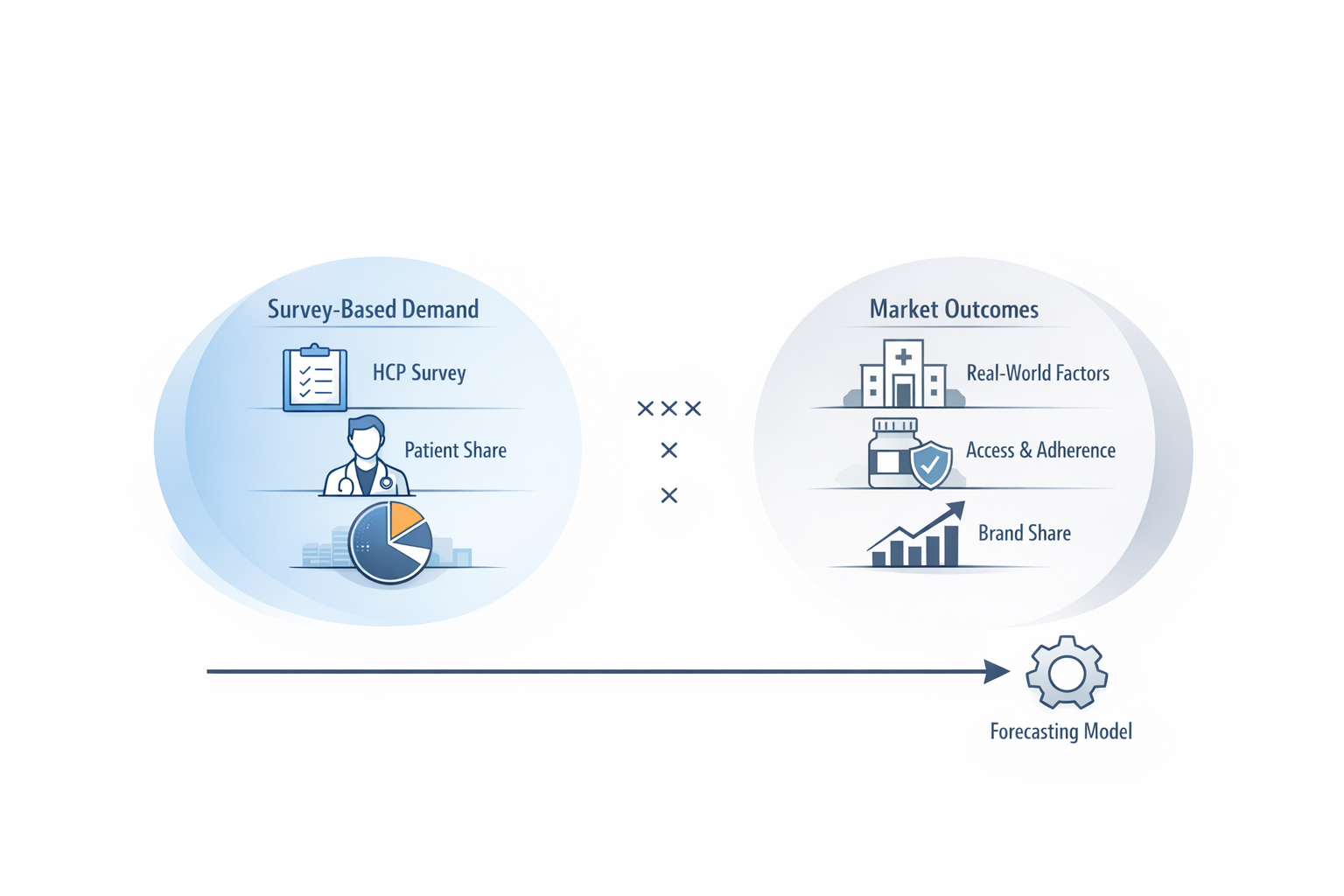

Can We Stop Trying to Validate Patient Share Against Market Share?

We ask surveys to do things they were never built for. One example: trying to “validate” patient share against market share. It sounds reasonable, but the two measures come from different worlds and behave differently.

I wrote a short piece on what survey‑based demand actually measures, why calibration creates confusion, and how to think about validity in a way that’s more useful for real decision‑making.

If you work with HCP demand data—on either the insights or forecasting side—I think you’ll find it useful.

Demand Assessment with HCP and Patient Samples: A 2026 Perspective

Demand assessment has always been a balance between clinical realism and the need to aggregate that realism into a credible market forecast. When I first wrote on this topic in 2007, the industry was wrestling with how to incorporate patient-level nuance without collapsing the ability to forecast at the market level. That tension hasn’t gone away; if anything, it has intensified as clinical complexity, data fragmentation, and therapeutic personalization have increased. In this article I articulate a principle that still guides my work today: methodological realism is only valuable when it preserves, rather than obscures, our ability to make reliable market-level predictions.

What Makes For a Good Methodologist?

What sets a methodologist apart from the rest?

In this article, I share some thoughts on what makes a methodologist truly valuable to insights professionals and decision-makers.